Public cloud infrastructures and microservices are pushing the limits of resources and service delivery beyond what was imaginable until very recently. In order to keep up with the demand, network infrastructures and network technologies had to evolve as well. Software-defined networking (SDN) is the pinnacle of advancement in cloud networking; by using SDN, developers can now deliver an optimized, flexible networking experience that can adapt to the growing demands of their clients.

This article will discuss how Tigera’s new Vector Packet Processing (VPP) data plane fits into this landscape and share some benchmark details about its performance. Then it will demonstrate how to run a VPP-equipped cluster using AWS public cloud and secure it with Internet Protocol Security (IPsec).

Introduction to Vector Packet Processing

Project Calico is an open-source networking and security solution. Although it focuses on securing Kubernetes networking, Calico can also be used with OpenStack and other workloads. Calico uses a modular data plane that allows a flexible approach to networking, providing a solution for both current and future networking needs.

VPP is an easily extensible, kernel-independent, highly optimised, and blazing-fast open-source data plane project that operates between layer 2 and layer 4 of the OSI model.

VPP runs as a user space process with its own networking stack to handle network traffic. This allows VPP to use fewer resources than general-purpose data planes while still offering excellent visibility and performance.

How does VPP work?

VPP uses a number of techniques to process packets as quickly as possible. Optimization and caching are arguably the most important of them.

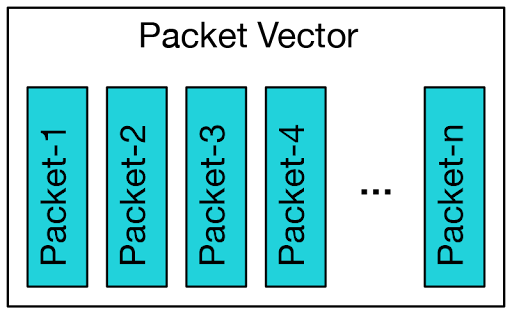

Initially, VPP grabs the largest available block of packets from input nodes to form a packet vector. A packet vector is a simple form of grouping for packets that are similar.

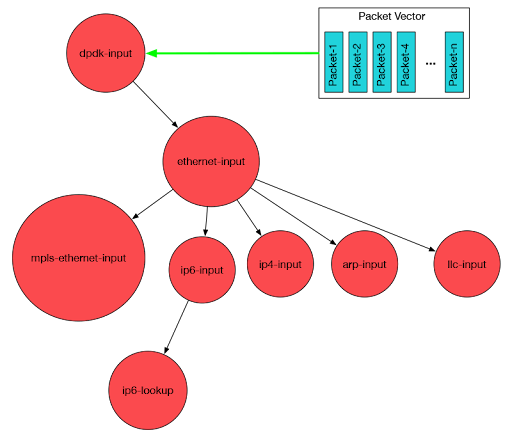

Next, VPP processes the vector of packets through a packet processing graph.

At each step, VPP runs the entire vector through a single graph node before moving on to the next node. The first packet processed from each vector warms up the cache, which then speeds up processing of the subsequent packets. If VPP encounters an error during packet processing, it will try to form a bigger packet vector next time to compensate for the loss. This allows it to maintain stable throughput and latency.

Baking cupcakes can be a simple analogy to explain VPP. Imagine we have a large number of cupcakes of varying size and type ready to bake. In order to start baking, we could set the temperature of the oven, put a cupcake into the oven and wait for it to be ready, then turn off the oven, take out the cupcake and, finally, reset the oven temperature for the next cupcake.

Instead of repeating this procedure for each cupcake, we can use a smarter method and group them by their cooking requirements. This means setting the oven to the required temperature for each group, putting each group into the oven until it’s done, then repeating for the remaining groups.

Since we are grouping cupcakes together, when our timer goes off, we will end up with many cupcakes instead of one, which can greatly decrease the overall time we have to spend baking.
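The same batching idea can be sketched in a few lines of shell. This is only a toy illustration and not how VPP is implemented: the node names `node_parse` and `node_route` are hypothetical, and the "work" done by each node is just tagging a string. What it shows is the order of operations, where every node handles the whole vector before the next node runs.

```shell
# Toy illustration of vector processing; node names are hypothetical.
# Each "graph node" receives the whole vector as its arguments, logs how many
# packets it was given to stderr, and prints one processed packet per line.
node_parse() {
  echo "node_parse: $# packets" >&2
  for p in "$@"; do printf 'parsed:%s\n' "$p"; done
}
node_route() {
  echo "node_route: $# packets" >&2
  for p in "$@"; do printf 'routed:%s\n' "$p"; done
}

# Run each node over the entire vector before moving on to the next node.
process_vector() {
  for node in node_parse node_route; do
    # Replace the vector with the node's output. Word splitting is fine here
    # because the toy packet names contain no whitespace or glob characters.
    set -- $("$node" "$@")
  done
  printf '%s\n' "$@"
}

process_vector pkt1 pkt2 pkt3
```

Running the sketch prints each packet tagged by both nodes, while the stderr log shows that each node was invoked once with three packets rather than three times with one packet.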

Calico integration

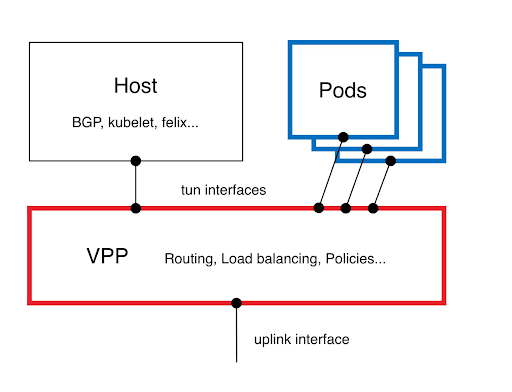

Calico’s VPP implementation is a pure layer 3 data plane. Pods send their traffic to VPP pods using TUN interfaces. VPP processes the traffic and makes routing, load balancing and policy decisions.

Such a design allows users to leverage VPP capabilities without any modification to their application.

Performance

Benchmarks are an ideal way to determine if a technology can live up to its promises, since numbers and metrics generated by benchmarks are easily comparable. Although these performance numbers might differ in different environments, the end result should be similar in most cases.

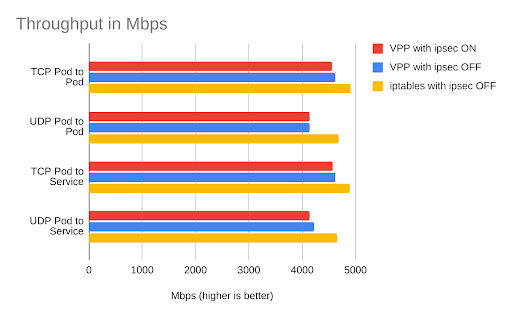

This benchmark shows how VPP can push data at almost line rate for both encrypted and unencrypted traffic. Note that AWS limits a single traffic flow between EC2 instances to 5 Gbps. If your scenarios require more than 5 Gbps of throughput, make sure to change Calico encapsulation to VXLAN mode so you can bypass this limitation.

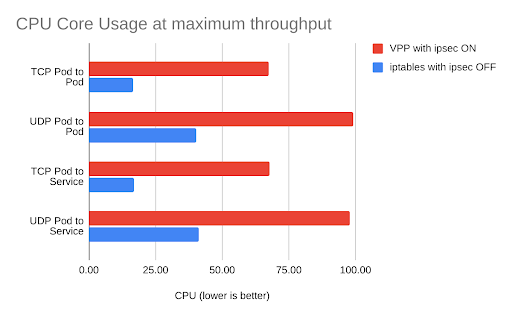

The next chart compares CPU utilization between the VPP and iptables data planes. Due to an issue with the DPDK driver implementation in AWS, VPP runs in poll mode, which keeps a single CPU core busy even when there is no traffic (and results in these high numbers). These numbers should eventually drop, since the AWS team is actively working to solve this problem. More information can be found here.

Demo

Before we begin

In this demo, we will set up an EKS cluster in Amazon’s public cloud infrastructure, and integrate it with the Calico VPP data plane. There are a few prerequisite tools you will need to follow these steps. Please make sure you have kubectl, eksctl and awscli installed and configured on your system.

Install awscli

Install eksctl

Install kubectl
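Before continuing, it can help to confirm that the three tools are actually on your `PATH`. The following is a minimal sketch: the function name `missing_tools` is made up for this example, and it only checks that each binary can be found (the awscli binary is called `aws`), not that it is configured with valid credentials.

```shell
# Print the name of every listed tool that cannot be found on PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

missing="$(missing_tools kubectl eksctl aws)"
if [ -n "$missing" ]; then
  # Unquoted on purpose so the names are printed on a single line.
  echo "Missing tools:" $missing
else
  echo "All prerequisite tools found."
fi
```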

Cluster setup

Use eksctl to create an EKS cluster without a node group.

eksctl create cluster --name calico-vpp-preview --without-nodegroup

An AWS EKS cluster comes with a preinstalled version of amazon-vpc-cni-k8s. Before we can install the Calico VPP manifest, we need to remove amazon-vpc-cni-k8s from our cluster.

Use the following command to remove the default amazon-vpc-cni-k8s DaemonSet.

kubectl delete daemonset -n kube-system aws-node
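To confirm the DaemonSet is really gone before installing Calico, a small check like the one below can be used. It is a sketch rather than part of the official procedure, and the helper name `aws_cni_removed` is hypothetical.

```shell
# Succeeds (exit status 0) when the aws-node DaemonSet can no longer be found.
# Note: the lookup also fails when the cluster is unreachable, so check your
# kubectl connectivity first if the result is surprising.
aws_cni_removed() {
  ! kubectl get daemonset -n kube-system aws-node >/dev/null 2>&1
}
```

After running the delete command, call it as `aws_cni_removed && echo "aws-node has been removed"`.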

Use the following manifest to install Calico and VPP pods.

kubectl apply -f https://raw.githubusercontent.com/projectcalico/vpp-dataplane/master/yaml/generated/calico-vpp-eks-dpdk.yaml

Now that we have installed Calico and the VPP data plane in our EKS cluster, it is time to add worker nodes. Each worker node will host application pods that will serve our clients.

To do this, add nodes to the cluster.

eksctl create nodegroup --cluster calico-vpp-preview --node-type t3.medium --node-ami auto --max-pods-per-node 100
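Node creation takes a few minutes. One way to wait for it and then look at the data plane pods is sketched below. The helper name `wait_for_cluster` and the 300-second timeout are arbitrary choices for this example, and the `calico-vpp-dataplane` namespace is taken from the commands used later in this article.

```shell
# Block until every node reports Ready (or the timeout expires), then list the
# pods in the calico-vpp-dataplane namespace along with the node each runs on.
wait_for_cluster() {
  kubectl wait --for=condition=Ready nodes --all --timeout=300s &&
    kubectl -n calico-vpp-dataplane get pods -o wide
}
```

Call `wait_for_cluster` once the `eksctl create nodegroup` command has returned.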

That’s it! We now have an EKS cluster running Calico with the VPP data plane.

IPsec setup

IPsec is a suite of protocols that allows users to establish secure communication through encrypted tunnels. In a typical setup, the overhead of IPsec encryption significantly reduces the amount of data that can be transferred over the available links, leaving much of the bandwidth unused.

VPP’s speed boost can help IPsec utilize all the available bandwidth without any changes to application or IPsec source code.

Use the following command to create a pre-shared key.

kubectl -n calico-vpp-dataplane create secret generic calicovpp-ipsec-secret --from-literal=psk="$(dd if=/dev/urandom bs=1 count=36 2>/dev/null | base64)"
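The key generation part of this command can be tried on its own. The sketch below reuses the same `dd` and `base64` pipeline inside a hypothetical helper named `generate_psk` and prints only the length of the result, so the key itself never appears on screen.

```shell
# Generate a pre-shared key the same way as the command above:
# 36 random bytes, base64-encoded.
generate_psk() {
  dd if=/dev/urandom bs=1 count=36 2>/dev/null | base64
}

psk="$(generate_psk)"
# 36 bytes is a multiple of 3, so base64 needs no '=' padding
# and the encoded key is exactly 48 characters long.
echo "PSK length: ${#psk}"
```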

Now that we have configured our pre-shared key, it is time to enable secure tunnels.

Use the following command to turn on IPsec tunnels.

kubectl -n calico-vpp-dataplane patch daemonset calico-vpp-node --patch "$(curl https://raw.githubusercontent.com/projectcalico/vpp-dataplane/master/yaml/patches/ipsec.yaml)"
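The tunnels only come up once the patched pods have restarted. Assuming the patch changes the pod template and the DaemonSet uses the default rolling update strategy, the restart can be followed with `kubectl rollout status`. The helper name `wait_for_ipsec_rollout` and the timeout value are illustrative choices.

```shell
# Wait for the patched calico-vpp-node DaemonSet to finish restarting its pods.
# Returns a non-zero status if the rollout does not complete within the timeout.
wait_for_ipsec_rollout() {
  kubectl -n calico-vpp-dataplane rollout status daemonset/calico-vpp-node --timeout=300s
}
```

Run `wait_for_ipsec_rollout` right after the patch command and continue once it reports that the rollout has finished.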

Verification

VPP offers a set of interactive user space commands to monitor and change data plane settings. The Calico VPP data plane project provides a command line utility that gives users an easy way to run these commands against the VPP data plane.

Note: The following command line utility requires bash and might not run in Windows without a bash emulator.

Use the following command to download the utility.

curl -o calivppctl https://raw.githubusercontent.com/projectcalico/vpp-dataplane/v0.14.0-calicov3.19.0/test/scripts/vppdev.sh

chmod +x calivppctl

By using the bash script with the vppctl option and providing the name of the worker node, we can interact with the VPP interactive session to gather information about our VPP deployment and network status.

./calivppctl vppctl ip-192-168-12-227.us-east-2.compute.internal

The following command shows the IPsec tunnels established in the previous step.

vpp# show ipsec tunnel
ipip0 flags:[none]
output-sa: [0] sa 2147483648 (0x80000000) spi 3658219910 (0xda0c0186) protocol:esp flags:[esn anti-replay aead ctr ]
input-sa: [1] sa 3221225472 (0xc0000000) spi 3207639915 (0xbf30b36b) protocol:esp flags:[esn anti-replay inbound aead ctr ]
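Each security association in this output is identified by its SPI, printed in both decimal and hexadecimal. If you save the output to a file, a short filter can pull the SPIs out for comparison between nodes. The sketch below uses a hypothetical helper named `list_spis` and feeds it an abbreviated copy of the two SA lines shown above.

```shell
# Read `show ipsec tunnel` output on stdin and print one
# "decimal-spi hex-spi" pair per security association.
list_spis() {
  awk '{ for (i = 1; i + 2 <= NF; i++) if ($i == "spi") print $(i + 1), $(i + 2) }' |
    tr -d '()'
}

list_spis <<'EOF'
[0] sa 2147483648 (0x80000000) spi 3658219910 (0xda0c0186) protocol:esp
[1] sa 3221225472 (0xc0000000) spi 3207639915 (0xbf30b36b) protocol:esp
EOF

# Cross-check one pair: the decimal SPI formatted as hex should match.
printf '0x%x\n' 3658219910
```

The last command prints `0xda0c0186`, which matches the hexadecimal value VPP reports for the output SA.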

If you would like to know more about vppctl commands, please visit this link.

Cleanup

Use the following command to clean up the resources created during this demo.

eksctl delete cluster --name calico-vpp-preview

Conclusion

VPP’s unique design shows great potential for accelerating cloud computing network performance where traditional data planes might not meet the ever-growing demand of microservices.

In this article, we explored how Calico’s pluggable data plane design offers an easy way to integrate VPP into your Kubernetes cluster, without the need to modify your applications. We also looked at why it can be a viable alternative data plane for your Kubernetes cluster if you require a boost in network performance.

Since Calico VPP is currently in the tech preview phase, your feedback can play a huge role in its future. Please use our Slack channel to connect with the developers behind the VPP project or to share your ideas for the future of VPP with Project Calico.
