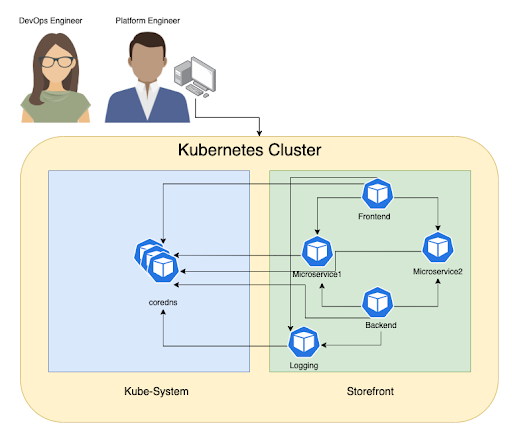

Troubleshooting container connectivity issues and performance hotspots in Kubernetes clusters can be frustrating in a dynamic environment where hundreds, possibly thousands, of pods are continually being created and destroyed. If you are a DevOps or platform engineer who needs to troubleshoot microservice and application connectivity issues, or figure out why a service is performing slowly, you might reach for traditional packet capture methods such as running tcpdump against a container in a pod. That may work in a siloed, single-developer environment, but enterprise-level troubleshooting comes with its own mandatory requirements and scale. Rather than being slowed down by these requirements, you want to address them up front in order to shorten the time to resolution.

Dynamic Packet Capture is a Kubernetes-native way to troubleshoot your microservices and applications quickly and efficiently without granting extra permissions. Let’s look at a specific use case to see some challenges and best practices for live troubleshooting with packet capture in a Kubernetes environment.

Use case: CoreDNS service degradation

Let’s talk about this use case in the context of a hypothetical situation.

Scenario

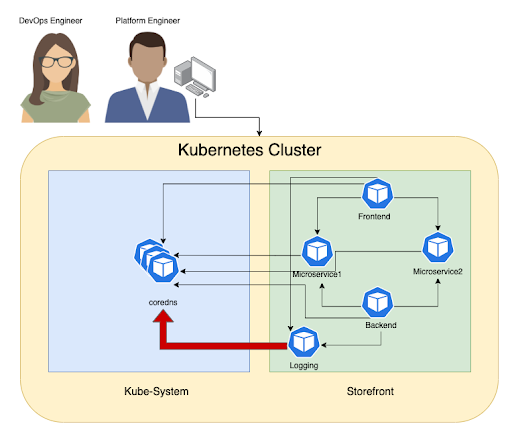

Your organization’s DevOps and platform teams are trying to figure out what’s wrong with the DNS service, which has degraded several times over the past few days.

The teams notice that, a few minutes before every outage, the logging pod in the storefront namespace sends a massive number of requests, accompanied by packet retransmissions.

Problem observations

The DevOps and platform engineers are presented with the following problems:

- The issue happens overnight, when none of the storefront service owners are present to do live troubleshooting

- The DevOps engineer doesn’t have admin privileges in the storefront namespace and cannot run a packet capture on this pod

- The alternative is to run tcpdump, but it is not available in the storefront images, and patching the app to add it would require further approvals, which are hard to get in a short period of time

- As more customers visit the storefront, the pods auto-scale, introducing new logging pods that also need packet capture

- Due to the dynamic nature of Kubernetes, if a pod is recreated, you need to capture the traffic from the new pod

Desired outcome

The DevOps and platform engineers want to troubleshoot the problem quickly, with a short time to resolution and a minimum number of steps.

- The DevOps engineer needs self-service, on-demand access to run a Dynamic Packet Capture job in the storefront namespace, in order to capture the problem affecting CoreDNS

- Only the DevOps engineer and the storefront service owner should be able to retrieve and review the captured files

- Additional filtering is required so that the capture is specific, which makes review faster and more targeted and avoids running out of space before the relevant information is captured

When troubleshooting microservices and applications in Kubernetes with Dynamic Packet Capture, you should consider the following best practices:

- Configure packet capture files to be rotated by size and time

- Filter the captured traffic based on the port and protocol

- Enable a self-service model with RBAC controls to allow teams to troubleshoot workloads within their own namespaces without impacting the rest of the Kubernetes cluster

- Leverage commonly used desktop-based networking troubleshooting tools like Wireshark to analyze data from packet capture
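
The first practice, rotating capture files by size and time, can be sketched as a merge patch. This is a minimal sketch under assumptions: the `captureMaxSizeBytes`, `captureRotationSeconds`, and `captureMaxFiles` field names are taken from the Calico Enterprise FelixConfiguration reference and should be verified against your release, the values are purely illustrative, and the file name is hypothetical.

```shell
#!/bin/sh
# Sketch: write a merge patch that tightens packet capture rotation.
# ASSUMPTION: the capture* field names below exist in your release's
# FelixConfiguration; check the reference before applying.
# The values are illustrative, not recommendations.
PATCH_FILE="${PATCH_FILE:-capture-rotation-patch.json}"  # hypothetical file name

cat > "$PATCH_FILE" <<'EOF'
{
  "spec": {
    "captureMaxSizeBytes": 10000000,
    "captureRotationSeconds": 600,
    "captureMaxFiles": 5
  }
}
EOF

echo "Wrote $PATCH_FILE"

# Left commented out so the sketch does not touch a cluster:
# kubectl patch felixconfiguration default --type merge -p "$(cat "$PATCH_FILE")"
```

Writing the patch to a file first makes it easy to review or version before anyone applies it to the cluster.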

Demo: Addressing the problem using Dynamic Packet Capture

Dynamic Packet Capture is a Kubernetes-native way to capture packets from a specific pod or collection of pods, with a specified packet size and duration, in order to troubleshoot performance hotspots and connectivity issues faster. It is provided as a custom resource definition in the Kubernetes API and uses the same label-based selectors that network policies use to target workloads, so a single selector can identify one or many workload endpoints for capturing live traffic.

The following is a basic example of how to select a single workload:

    apiVersion: projectcalico.org/v3
    kind: PacketCapture
    metadata:
      name: sample-capture-nginx
      namespace: sample
    spec:
      selector: k8s-app == "nginx"

Here is another example of how to select all workload endpoints in a sample namespace:

    apiVersion: projectcalico.org/v3
    kind: PacketCapture
    metadata:
      name: sample-capture-all
      namespace: sample
    spec:
      selector: all()

We will select the app “logging” and specify UDP port 53 in our manifest, as follows:

    apiVersion: projectcalico.org/v3
    kind: PacketCapture
    metadata:
      name: pc-storefront-logging-dns
      namespace: storefront
    spec:
      selector: app == "logging"
      filters:
        - protocol: UDP
          ports:
            - 53
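
Because the degradation in this scenario happens overnight, it may help to bound the capture to a time window rather than leave it running. The sketch below writes a variant of the manifest above with a start and end time. It assumes your release supports `spec.startTime` and `spec.endTime` as RFC 3339 timestamps, which should be confirmed in the PacketCapture resource reference; the timestamps and the file name are illustrative placeholders.

```shell
#!/bin/sh
# Sketch: generate a time-bounded PacketCapture manifest.
# ASSUMPTION: spec.startTime and spec.endTime (RFC 3339) are supported by
# your release; verify in the PacketCapture resource reference.
START_TIME="${START_TIME:-2024-01-01T01:00:00Z}"  # illustrative placeholder
END_TIME="${END_TIME:-2024-01-01T03:00:00Z}"      # illustrative placeholder
MANIFEST="${MANIFEST:-pc-storefront-logging-dns-window.yaml}"  # hypothetical name

cat > "$MANIFEST" <<EOF
apiVersion: projectcalico.org/v3
kind: PacketCapture
metadata:
  name: pc-storefront-logging-dns
  namespace: storefront
spec:
  selector: app == "logging"
  filters:
    - protocol: UDP
      ports:
        - 53
  startTime: "$START_TIME"
  endTime: "$END_TIME"
EOF

echo "Wrote $MANIFEST"

# Left commented out so the sketch does not touch a cluster:
# kubectl apply -f "$MANIFEST"
```

Overriding `START_TIME` and `END_TIME` in the environment lets the same script be reused for each night's window.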

Using namespace-scoped RBAC (a Role and a RoleBinding), we can give the devops-sa service account privileges to run packet captures in the storefront namespace.

    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      namespace: storefront
      name: tigera-packet-capture-role
    rules:
      - apiGroups: ["projectcalico.org"]
        resources: ["packetcaptures"]
        verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: tigera-packet-capture-role-devops
      namespace: storefront
    subjects:
      - kind: ServiceAccount
        name: devops-sa
        namespace: storefront
    roleRef:
      kind: Role
      name: tigera-packet-capture-role
      apiGroup: rbac.authorization.k8s.io

At this point, the DevOps engineer has privileges to run packet capture jobs but cannot retrieve the captured files. If they try to retrieve these files, they should get an HTTP 403 (Forbidden) response, meaning the server refuses the request because the client does not have access rights to the content.

In order to allow the DevOps engineer to access the capture files generated in the storefront namespace, roles and role bindings similar to the ones below can be used. The ClusterRole allows the service account to create authentication reviews, and the namespaced Role grants get access to the packetcaptures/files subresource.
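
One way to confirm this split between running captures and reading capture files is `kubectl auth can-i` with impersonation. The sketch below wraps two checks in a helper; the function name `check_capture_access` is hypothetical, and it assumes the user running it is allowed to impersonate the service account.

```shell
#!/bin/sh
# check_capture_access NAMESPACE SERVICE_ACCOUNT
# Prints whether the service account may create PacketCapture resources and
# whether it may read the packetcaptures/files subresource.
# ASSUMPTION: the caller has permission to impersonate the service account.
check_capture_access() {
  ns="$1"
  sa="system:serviceaccount:$1:$2"

  printf 'create packetcaptures: '
  # "kubectl auth can-i" exits non-zero when the answer is "no", so ignore
  # the exit status and rely on the printed yes/no instead.
  kubectl auth can-i create packetcaptures.projectcalico.org \
    -n "$ns" --as="$sa" || true

  printf 'get packetcaptures/files: '
  kubectl auth can-i get packetcaptures.projectcalico.org \
    --subresource=files -n "$ns" --as="$sa" || true
}
```

Calling `check_capture_access storefront devops-sa` prints one yes/no line per permission, so it can be run again after any RBAC change to see what the service account is allowed to do.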

    cat <<EOF | kubectl apply -f -
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: tigera-authentication-clusterrole-devops
    rules:
      - apiGroups: ["projectcalico.org"]
        resources: ["authenticationreviews"]
        verbs: ["create"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: tigera-authentication-clusterrolebinding-devops
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: tigera-authentication-clusterrole-devops
    subjects:
      - kind: ServiceAccount
        name: devops-sa
        namespace: storefront
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      namespace: storefront
      name: tigera-capture-files-role
    rules:
      - apiGroups: ["projectcalico.org"]
        resources: ["packetcaptures/files"]
        verbs: ["get"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: tigera-capture-files-role-devops
      namespace: storefront
    subjects:
      - kind: ServiceAccount
        name: devops-sa
        namespace: storefront
    roleRef:
      kind: Role
      name: tigera-capture-files-role
      apiGroup: rbac.authorization.k8s.io
    EOF

Finally, once the DevOps engineer has the right privileges to retrieve the captured files, they can use the following API to download the pcap files.

    # If you already have a load balancer or ingress in your environment, you can skip the port-forward step
    kubectl port-forward -n tigera-manager service/tigera-manager 9443:9443 &

    # Update these to match your environment
    NS=<REPLACE_WITH_PACKETCAPTURE_NS>
    NAME=<REPLACE_WITH_PACKETCAPTURE_NAME>

    # Read the service account token from its auto-generated Secret.
    # On Kubernetes 1.24+, where such Secrets are no longer created automatically,
    # "kubectl create token devops-sa -n storefront" is an alternative.
    TOKEN=$(kubectl get secret -n storefront $(kubectl get serviceaccount devops-sa -n storefront -o jsonpath='{range .secrets[*]}{.name}{"\n"}{end}' | grep token) -o go-template='{{.data.token | base64decode}}')

    # -k skips TLS certificate verification, which is acceptable only for the local port-forward
    curl "https://localhost:9443/packet-capture/download/$NS/$NAME/files.zip" -L -O -k \
      -H "Authorization: Bearer $TOKEN" -vvv
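
With the archive downloaded, the capture can be opened in Wireshark, or summarized on the command line with tshark, the terminal tool that ships with Wireshark. The sketch below ranks the DNS names queried most often, which is one way to see what the logging pod is asking CoreDNS for. The function name `top_dns_queries` is hypothetical, and it assumes the extracted capture files end in `.pcap`; adjust the pattern if your archive is laid out differently.

```shell
#!/bin/sh
# top_dns_queries DIR
# Prints the ten most frequently queried DNS names found in the .pcap files
# under DIR, most frequent first.
# ASSUMPTION: extracted capture files use the .pcap extension.
top_dns_queries() {
  find "$1" -type f -name '*.pcap' | while IFS= read -r f; do
    # Keep only DNS queries (not responses) and print the queried name.
    tshark -r "$f" -Y 'dns.flags.response == 0' \
      -T fields -e dns.qry.name </dev/null
  done | sort | uniq -c | sort -rn | head -n 10
}
```

A typical use would be `unzip -o files.zip -d capture` followed by `top_dns_queries capture`. A single name dominating the list would point at a retry loop or a misconfigured lookup in the logging pod.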

Once the DevOps engineer has captured the traffic needed for their analysis, they can stop the packet capture using the following command:

    kubectl delete PacketCapture pc-storefront-logging-dns -n storefront

Conclusion

In most incidents that call for a packet capture, the problem is short-lived and tends to occur at random. When it does happen, you need to move quickly to capture useful information and find the root cause. Given the dynamic and ephemeral nature of Kubernetes, a Kubernetes-native solution like Dynamic Packet Capture is the most efficient approach.

Ready to try Dynamic Packet Capture for yourself? Get started with a free Calico Cloud trial.
