Project Calico and eBPF

Project Calico has offered a production-ready data plane based on eBPF since September 2020, and it’s been available for technical evaluation for even longer (since February 2020).

The prerequisites and limitations are simple to review, the data plane is easy to enable, and your configuration is easy to validate. So, there’s never been a better time to start experiencing the benefits!

You do know what those are, don’t you? Don’t worry if not! That’s what this blog post is about. We’ve reached a point where the journey is easy to make, if you know why you want to get there.

Key advantages of using Calico with eBPF

Calico is already the most widely deployed Kubernetes network security solution. What can eBPF do to help our winning formula further? I’ll dive into the details, but let’s look at the highest possible level first.

These three key benefits apply across all supported environments:

- General performance

- Native Kubernetes service handling

- Source IP preservation and Direct Server Return (DSR)

Each of these benefits is significant and worth discussing in more detail.

Performance

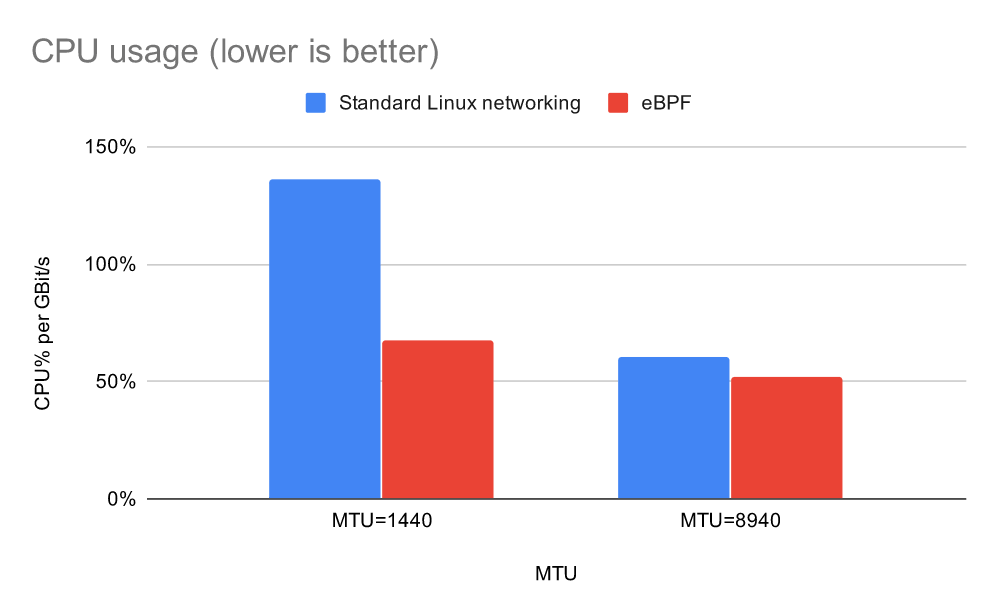

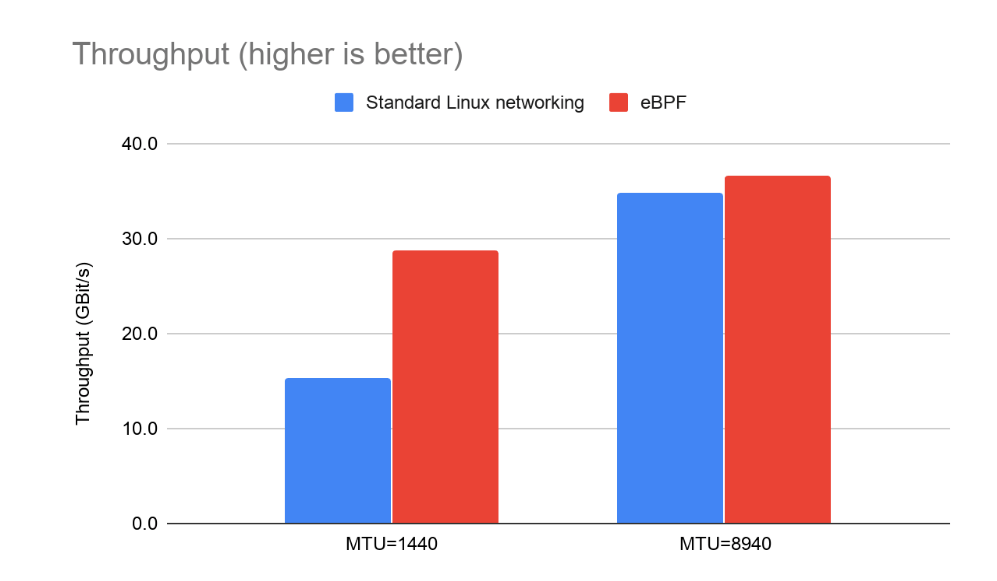

Calico’s eBPF data plane achieves high performance in several ways. Firstly, it achieves higher throughput and/or less CPU per Gigabit of throughput. These two are essentially opposite sides of the same coin. This first benefit is achieved because eBPF programs run in kernel space and they run early in the netfilter packet flow, which results in increased efficiency.

Some graphs are included below to illustrate the gains, but frankly, I encourage you to test on a cluster closely resembling your own setup—the improvement can be significant and it’s great to see it yourself!

As you can see, normalizing the CPU usage per Gigabit, the eBPF data plane uses significantly less CPU per Gigabit than the standard Linux networking data plane. The margin is the biggest with small packet sizes.

For throughput tests, we used qperf to measure throughput between a pair of pods running on different nodes. Since much of the networking overhead is per packet, we tested with both a 1440-byte MTU and an 8940-byte MTU. The results speak for themselves. However, it’s important to read this data as the throughput limit for a single instance of qperf, not for the node. With either data plane, you could saturate the 40 Gigabit link if you assigned more CPU for a multi-threaded application or ran more pod instances.
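To see why packet size matters when overhead is per packet, here is a rough back-of-the-envelope sketch of how many packets per second are needed to sustain a given throughput at each MTU. It assumes every packet is exactly one MTU long and ignores header and framing overhead, and the `packets_per_second` helper is hypothetical rather than part of qperf or Calico.

```python
def packets_per_second(throughput_gbit: float, mtu_bytes: int) -> float:
    """Approximate packets/s needed to carry throughput_gbit at a given MTU.

    Assumption: every packet is exactly mtu_bytes long; framing and
    protocol header overhead are ignored.
    """
    bits_per_packet = mtu_bytes * 8
    return throughput_gbit * 1e9 / bits_per_packet


if __name__ == "__main__":
    link_gbit = 40.0  # the 40 Gigabit link mentioned in the text
    for mtu in (1440, 8940):
        pps = packets_per_second(link_gbit, mtu)
        print(f"MTU {mtu}: ~{pps / 1e6:.2f} million packets/s")
```

Under these assumptions, the smaller MTU needs roughly six times as many packets for the same throughput, so any saving made per packet counts for correspondingly more.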

Native Kubernetes service handling

As a general philosophy, maintaining compatibility with upstream Kubernetes components when possible is usually beneficial to the community. Originally, Calico’s eBPF data plane wasn’t planned to replace kube-proxy. However, as development progressed, we found that the optimum eBPF design for Calico’s features wouldn’t be able to work with the existing kube-proxy without introducing significant complexity and reducing overall performance.

Once we were nudged towards replacing kube-proxy, we decided to see how we could improve on the upstream implementation by natively handling Kubernetes services within the Calico data plane.

- Replacing kube-proxy results in:

- Reduced complexity

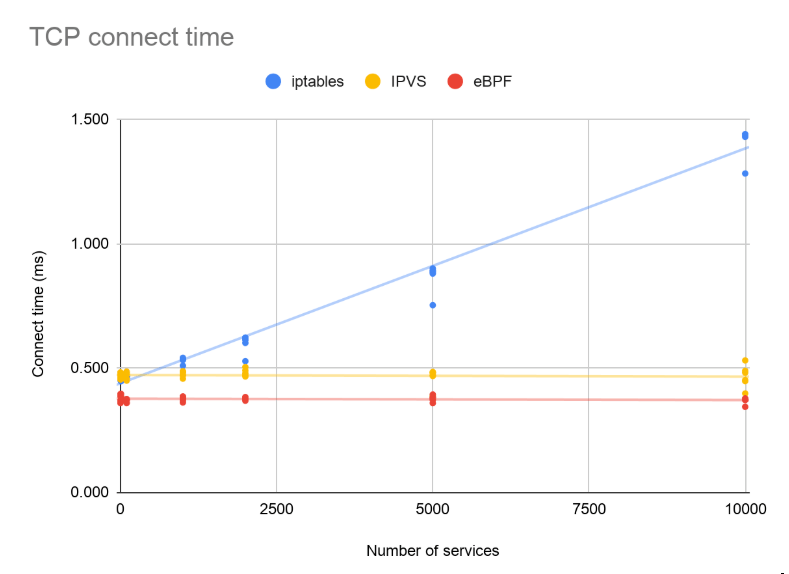

Latency reduction (most noticeable with many short-lived, latency-sensitive connections)

In its iptables mode, kube-proxy implements service handling with a list of rules that grows with the number of services. Hence, its latency gets worse as the number of services increases. Both kube-proxy’s IPVS mode and Calico’s eBPF implementation use an efficient map lookup instead, resulting in a flat performance curve as the number of services increases.
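The difference between the two approaches can be sketched with a toy model. This only illustrates a linear rule scan versus a hash map lookup; it is not how kube-proxy or Calico is implemented, and the service addresses, the `build_services`, `linear_lookup` and `map_lookup` helpers, and the rule counter are all made up for the example.

```python
from typing import Dict, List, Optional, Tuple

Service = Tuple[str, int]  # (cluster IP, port)
Backend = str              # backend pod address


def build_services(n: int) -> List[Tuple[Service, Backend]]:
    """Create n hypothetical services, each with one hypothetical backend."""
    return [
        ((f"10.96.{i // 256}.{i % 256}", 80), f"10.244.{i // 256}.{i % 256}:8080")
        for i in range(n)
    ]


def linear_lookup(
    rules: List[Tuple[Service, Backend]], key: Service
) -> Tuple[Optional[Backend], int]:
    """Scan rules in order; return the match and how many rules were checked."""
    for checked, (service, backend) in enumerate(rules, start=1):
        if service == key:
            return backend, checked
    return None, len(rules)


def map_lookup(table: Dict[Service, Backend], key: Service) -> Optional[Backend]:
    """One hash lookup; on average the cost does not grow with table size."""
    return table.get(key)


if __name__ == "__main__":
    for n in (10, 1000, 10000):
        rules = build_services(n)
        table = dict(rules)
        last_service = rules[-1][0]  # worst case for the linear scan
        backend, checked = linear_lookup(rules, last_service)
        assert backend == map_lookup(table, last_service)
        print(f"{n} services: linear scan checked {checked} rules; map used one lookup")
```

In the worst case the scan touches every rule, while the map lookup stays at a single hash operation regardless of how many services exist, which is the flat curve described above.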

Source IP preservation and Direct Server Return

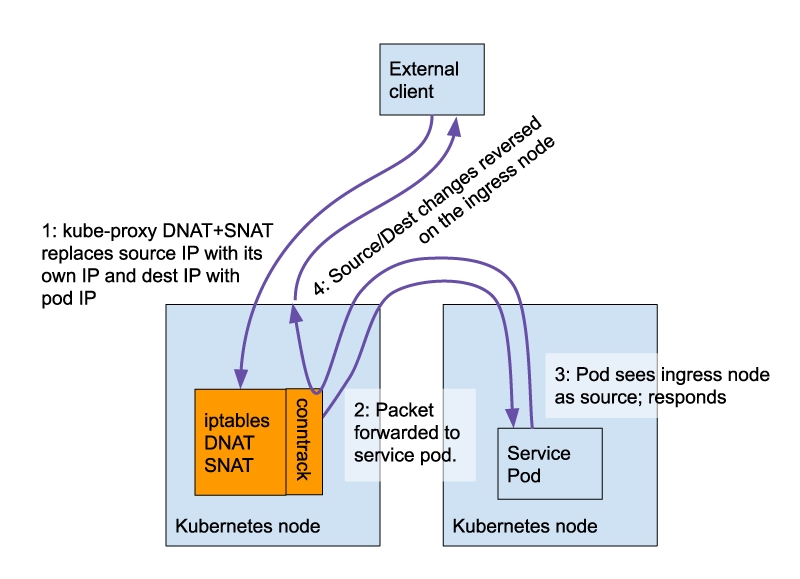

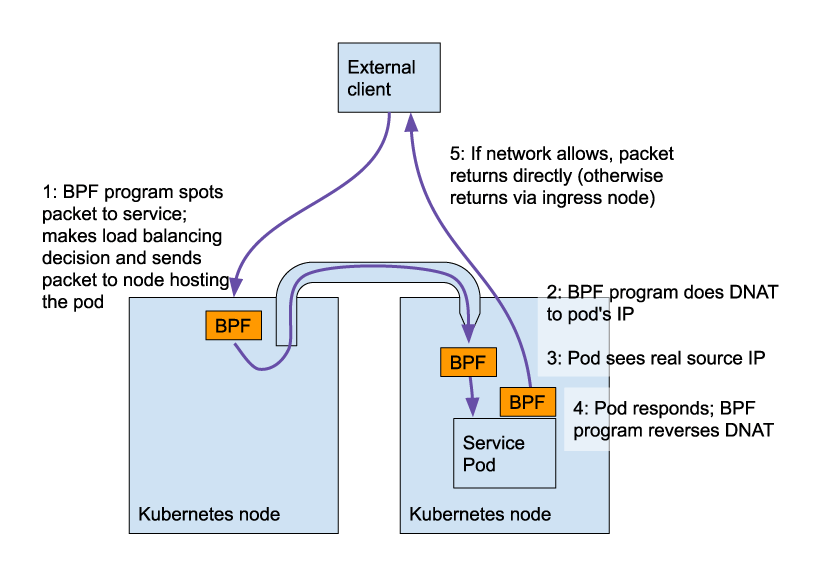

The data path through a cluster with the eBPF data plane enabled is simplified. This is best understood by contrasting visualizations of the flows before and after enabling the eBPF data plane.

Without Calico’s eBPF data plane:

With Calico’s eBPF data plane:

Did you spot the two improvements that the eBPF data plane enables?

- Source IP preservation – The service pod can see the real IP of the user and can act on it or record it appropriately. This is a big benefit because there are many security, compliance and SLA-related reasons why you might want to log or see the real IP of the client on the workload pods.

- Direct server return (DSR) – If the upstream network allows it, the return traffic can go straight out to the user, reducing unnecessary cluster load and reducing undesirable latency.
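The two improvements can also be expressed as a toy model of a single external request that arrives at one node and is served by a pod on another. This is a simplified sketch, not Calico code: the IP addresses, the node labels, and the `Flow` and `forward` names are all hypothetical, and it assumes that without source IP preservation the receiving node rewrites the source address to its own.

```python
from dataclasses import dataclass
from typing import List

CLIENT_IP = "203.0.113.7"       # hypothetical client address
INGRESS_NODE_IP = "192.0.2.10"  # hypothetical node that first receives the request


@dataclass
class Flow:
    source_seen_by_pod: str  # source IP the backend pod observes
    return_path: List[str]   # hops the reply takes back to the client


def forward(preserve_source_ip: bool, dsr: bool = False) -> Flow:
    """Model a request entering at the ingress node and served on a backend node."""
    if dsr and not preserve_source_ip:
        # If the source was rewritten, the backend node does not know the
        # client's address and cannot reply to it directly.
        raise ValueError("DSR in this model requires the client IP to be preserved")

    # Without preservation, the pod sees the ingress node's address.
    source = CLIENT_IP if preserve_source_ip else INGRESS_NODE_IP

    # With DSR the reply skips the ingress node on the way back.
    if dsr:
        path = ["backend node", "client"]
    else:
        path = ["backend node", "ingress node", "client"]
    return Flow(source, path)


if __name__ == "__main__":
    print(forward(preserve_source_ip=False))
    print(forward(preserve_source_ip=True))
    print(forward(preserve_source_ip=True, dsr=True))
```

The third case shows both benefits together: the pod sees the real client address, and the reply takes one hop fewer.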

Ready to try Calico eBPF?

If you feel ready to jump in, the road is clear and there’s no time like the present! Get started with our documentation.

Or, if Calico is working just fine for you and you want to know more before diving into eBPF, Tigera offers a series of free Calico courses:

- CCO-L2-EBPF – which is specifically about the eBPF data plane.

- CCO-L1 – about container and Kubernetes networking and security fundamentals.

The eBPF logo by the eBPF Foundation is licensed under CC-BY-4.0
