In this blog post, we explore Kubernetes Topology Aware Routing and how it can improve network performance for workloads running on Amazon EKS. We discuss its benefits in reducing latency and optimizing network traffic flow. We also show how to minimize the performance impact of overlay networking by using encapsulation only when it is needed, which in this setup means communication across availability zones. Together, these techniques improve network performance by making better use of the underlying network topology.

Understanding Topology Aware Routing

Kubernetes clusters are being deployed more often in multi-zone environments. The nodes that make up the cluster are spread across availability zones. If one availability zone is having problems, the nodes in the other availability zones will keep working, and your cluster will continue to provide service for your customers. While this helps to ensure high availability, it also results in increased latency for inter-zone workload communication and can result in inter-zone data transfer costs.

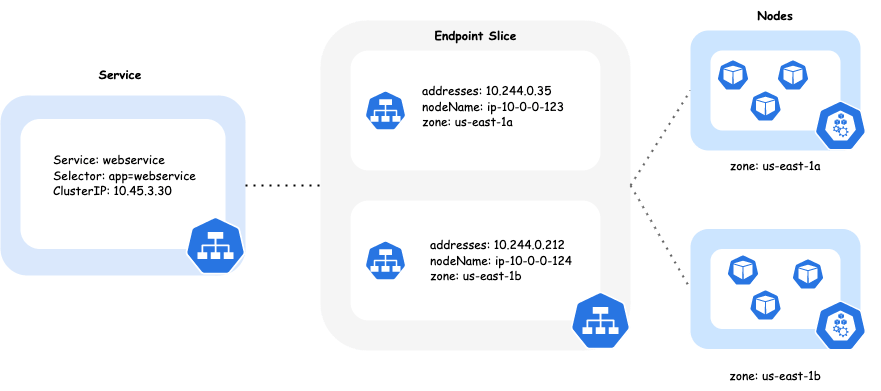

Under normal circumstances, when traffic is directed to a Kubernetes Service, it evenly distributes requests among the pods that support it. Those pods can be spread across nodes in different zones. Topology Aware Routing is a feature that helps direct traffic within the same zone where it was initially generated. When determining the endpoints for a Kubernetes Service, the EndpointSlice controller takes into account the topology, including the region and zone, of each endpoint. It then populates the hints field to assign the endpoint to a specific zone. These hints are utilized to prioritize load balancing towards endpoints that are closer in terms of topology.
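To make the role of the hints concrete, here is a minimal Python sketch of how a proxy might filter endpoints by zone. It is a simplified model rather than the kube-proxy or Calico implementation: the `Endpoint` class, the pod addresses, and the fallback rules (ignore hints if any endpoint lacks one, or if no endpoint is hinted for the local zone) are our assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Endpoint:
    """A simplified stand-in for one entry in an EndpointSlice."""
    address: str
    zone: str                                             # zone the backing pod runs in
    hint_zones: List[str] = field(default_factory=list)   # names under hints.forZones


def endpoints_for_zone(endpoints: List[Endpoint], proxy_zone: str) -> List[Endpoint]:
    """Return the endpoints a proxy running in proxy_zone would load balance across."""
    # Assumed safeguard: if any endpoint has no hint, ignore hints entirely.
    if any(not ep.hint_zones for ep in endpoints):
        return list(endpoints)
    local = [ep for ep in endpoints if proxy_zone in ep.hint_zones]
    # Assumed safeguard: if nothing is hinted for this zone, use every endpoint.
    return local or list(endpoints)


# Hypothetical pod addresses, two endpoints hinted for each zone.
ENDPOINTS = [
    Endpoint("10.244.0.10", "us-east-1a", ["us-east-1a"]),
    Endpoint("10.244.0.11", "us-east-1a", ["us-east-1a"]),
    Endpoint("10.244.1.10", "us-east-1b", ["us-east-1b"]),
    Endpoint("10.244.1.11", "us-east-1b", ["us-east-1b"]),
]

for zone in ("us-east-1a", "us-east-1b"):
    picked = [ep.address for ep in endpoints_for_zone(ENDPOINTS, zone)]
    print(zone, "->", picked)
```

In this model, a client in us-east-1a only ever reaches the two endpoints hinted for us-east-1a, while a client in a zone with no hinted endpoints falls back to the full set.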

Topology Aware Routing is supported for both the Calico standard Linux dataplane and the Calico eBPF dataplane. When using the eBPF dataplane, Calico routes traffic to endpoints based on the hints set by the EndpointSlice controller. When using the standard Linux dataplane, this is handled by kube-proxy. So no matter which dataplane you’re using, you can take advantage of the benefits provided by Topology Aware Routing.

Benefits of Topology Aware Routing

- Reduced Latency: One of the key advantages of topology aware routing is its ability to reduce network latency. By keeping traffic within the zone where it originated, topology aware routing avoids the extra delay of crossing availability zones, so requests reach their destination sooner. This reduction in latency is particularly important for latency-sensitive applications, such as real-time data processing and online gaming, where even small delays can have a significant impact on user experience.

- Optimized Network Traffic Flow: Topology aware routing optimizes network traffic flow by intelligently distributing traffic across available endpoints in the zone from which the traffic originated. By minimizing inter-zone traffic we improve performance and also save money on data transfer costs.

Optimizing for Network Topology

Calico provides support for cross-subnet encapsulation modes for cluster overlay networking. In this mode, when operating within a subnet, the underlying network behaves as a Layer 2 network. Consequently, packets transmitted within a single subnet do not require encapsulation, resulting in the performance advantages of a non-overlay network.
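The cross-subnet decision can be sketched in a few lines of Python. This is only a model of the idea, not Calico's code: the two /20 subnets and the node addresses are assumptions chosen for illustration, and the function names are ours.

```python
import ipaddress

# Assumed layout: one /20 subnet per availability zone.
# Substitute the real subnets of your VPC.
SUBNETS = [
    ipaddress.ip_network("10.0.160.0/20"),
    ipaddress.ip_network("10.0.176.0/20"),
]


def subnet_of(node_ip: str):
    """Return the configured subnet containing node_ip, or None if there is none."""
    addr = ipaddress.ip_address(node_ip)
    return next((net for net in SUBNETS if addr in net), None)


def needs_encapsulation(src_node_ip: str, dst_node_ip: str) -> bool:
    """Cross-subnet mode: encapsulate only when the nodes sit in different subnets."""
    src, dst = subnet_of(src_node_ip), subnet_of(dst_node_ip)
    # Nodes outside every known subnet are treated conservatively as "different".
    return src is None or dst is None or src != dst


# Two nodes in the same subnet: traffic is sent unencapsulated.
print(needs_encapsulation("10.0.161.71", "10.0.166.121"))
# Nodes in different subnets: traffic is encapsulated.
print(needs_encapsulation("10.0.161.71", "10.0.181.178"))
```

Under the assumed layout, the first pair of nodes shares a subnet and needs no encapsulation, while the second pair crosses a subnet boundary and does.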

Encapsulating packets involves a minor CPU overhead, and it also increases the packet size due to the inclusion of encapsulation headers like VXLAN or IP-in-IP. This larger packet size limits the maximum size of the inner packet that can be transmitted. Consequently, to transmit the same amount of data, it may be necessary to send more packets.
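A rough back-of-the-envelope calculation shows the effect of the larger headers. The sketch below assumes a 1500-byte link MTU, IPv4 with plain 20-byte IP and TCP headers, and the commonly cited per-packet overheads of 20 bytes for IP-in-IP and 50 bytes for VXLAN; your environment may differ, for example if jumbo frames are enabled.

```python
import math

# Assumed per-packet encapsulation overheads for an IPv4 underlay, in bytes.
ENCAP_OVERHEAD = {"none": 0, "ipip": 20, "vxlan": 50}
TCP_IP_HEADERS = 40  # IPv4 header (20) + TCP header (20), no options


def packets_needed(payload_bytes: int, link_mtu: int, encap: str) -> int:
    """Number of TCP segments needed to carry payload_bytes over the link."""
    inner_mtu = link_mtu - ENCAP_OVERHEAD[encap]   # room left for the inner packet
    mss = inner_mtu - TCP_IP_HEADERS               # application bytes per packet
    return math.ceil(payload_bytes / mss)


for mode in ("none", "ipip", "vxlan"):
    print(mode, packets_needed(1_000_000, 1500, mode))
```

With these assumptions, sending 1,000,000 bytes takes 685 packets without encapsulation, 695 with IP-in-IP, and 710 with VXLAN.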

When Calico’s capability to encapsulate traffic selectively across zones is combined with Kubernetes Topology Aware Routing, it improves the overall network performance, optimizes resource utilization, and results in cost savings in cloud environments.

Solution Overview

Cluster Setup

Provision a cluster that is Topology Aware Routing compliant and satisfies all safeguards. During the deployment of your cluster, make sure to configure the Calico IPPool to utilize VXLANCrossSubnet encapsulation. This configuration can be set either within the Tigera Operator installation resource or the Helm Chart values file. With Calico, you can trust that encapsulation will be used efficiently, only encapsulating packets that traverse subnet boundaries.
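As a rough pre-flight check for the safeguards mentioned above, the following Python sketch looks at two of the conditions described in the Kubernetes documentation as we understand them: every node needs a zone label, and a Service needs at least as many endpoints as there are zones. The function name and input shape are hypothetical, and the remaining safeguards (such as balanced allocation based on allocatable CPU) are not modeled.

```python
from typing import Dict, List

ZONE_LABEL = "topology.kubernetes.io/zone"


def hint_blockers(node_labels: Dict[str, Dict[str, str]], endpoint_count: int) -> List[str]:
    """Return reasons why topology hints might not be assigned (partial check only)."""
    reasons = []
    # Nodes without a zone label give the control plane insufficient information.
    unlabeled = sorted(name for name, labels in node_labels.items()
                       if not labels.get(ZONE_LABEL))
    if unlabeled:
        reasons.append("nodes missing zone label: " + ", ".join(unlabeled))
    # Fewer endpoints than zones means some zone could not get a local endpoint.
    zones = {labels[ZONE_LABEL] for labels in node_labels.values()
             if labels.get(ZONE_LABEL)}
    if endpoint_count < len(zones):
        reasons.append(f"{endpoint_count} endpoint(s) for {len(zones)} zones")
    return reasons


# Illustrative input: node name -> labels, as reported by `kubectl get nodes`.
NODES = {
    "ip-10-0-161-71.ec2.internal": {ZONE_LABEL: "us-east-1a"},
    "ip-10-0-181-178.ec2.internal": {ZONE_LABEL: "us-east-1b"},
}

print(hint_blockers(NODES, endpoint_count=10))
```

An empty list means neither of the two modeled conditions is violated; it does not guarantee that hints will be assigned.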

Below, you’ll find an example Helm values file for installing Calico on an EKS cluster with the eBPF dataplane and VXLANCrossSubnet encapsulation enabled.

    installation:
      kubernetesProvider: "EKS"
      cni:
        type: Calico
        ipam:
          type: Calico
      calicoNetwork:
        linuxDataplane: BPF
        hostPorts: Disabled
        bgp: Disabled
        ipPools:
          - cidr: 10.244.0.0/16
            encapsulation: VXLANCrossSubnet

Please refer to the installation instructions for details on how to install the Calico eBPF dataplane.

Disable Source Destination Checks

By default, AWS performs a source/destination check on Elastic Network Interfaces (ENIs), dropping traffic whose source or destination address does not belong to the interface. We disable this check in order to allow Calico to route pod-to-pod traffic across the AWS network directly, without the need for an overlay network, as long as the pods are within the same VPC subnet.

Calico has the ability to automatically disable source/destination checks for the EKS cluster nodes. To ensure this functionality, it is important to grant the calico-node service account or EKS node-groups the necessary IAM permissions to manage this setting. By applying the settings provided below to the cluster’s FelixConfiguration, Calico takes care of configuring the nodes correctly as the cluster scales automatically.

    ---
    apiVersion: projectcalico.org/v3
    kind: FelixConfiguration
    metadata:
      name: default
    spec:
      awsSrcDstCheck: Disable

Explore Topology Aware Hints

We will first examine the traffic behavior between two Kubernetes workloads without the implementation of topology aware routing. This will allow us to observe the traffic flows before adding in the optimization. The objective is to ensure balanced traffic distribution among all the backing pods, regardless of their location within different availability zones.

For this example, we’ve deployed an EKS cluster with ten worker nodes spread evenly across two availability zones, us-east-1a and us-east-1b, in order to achieve balanced allocation.

    $ kubectl get nodes -L topology.kubernetes.io/zone
    NAME                           STATUS   ROLES    AGE    VERSION               ZONE
    ip-10-0-161-71.ec2.internal    Ready    <none>   18h    v1.26.4-eks-0a21954   us-east-1a
    ip-10-0-166-121.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1a
    ip-10-0-168-243.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1a
    ip-10-0-172-249.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1a
    ip-10-0-175-233.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1a
    ip-10-0-181-178.ec2.internal   Ready    <none>   18h    v1.26.4-eks-0a21954   us-east-1b
    ip-10-0-182-215.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1b
    ip-10-0-184-20.ec2.internal    Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1b
    ip-10-0-189-213.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1b
    ip-10-0-190-197.ec2.internal   Ready    <none>   141m   v1.26.4-eks-0a21954   us-east-1b

Let’s scale up the deployments so we have a balanced set of client pods initiating traffic to the server pods spread across the two availability zones.

    $ kubectl scale deployment -n topology-aware webclient --replicas=10
    deployment.apps/webclient scaled
    $ kubectl scale deployment -n topology-aware webservice --replicas=10
    deployment.apps/webservice scaled
    $ kubectl get deployments -n topology-aware
    NAME         READY   UP-TO-DATE   AVAILABLE   AGE
    webclient    10/10   10           10          16h
    webservice   10/10   10           10          16h

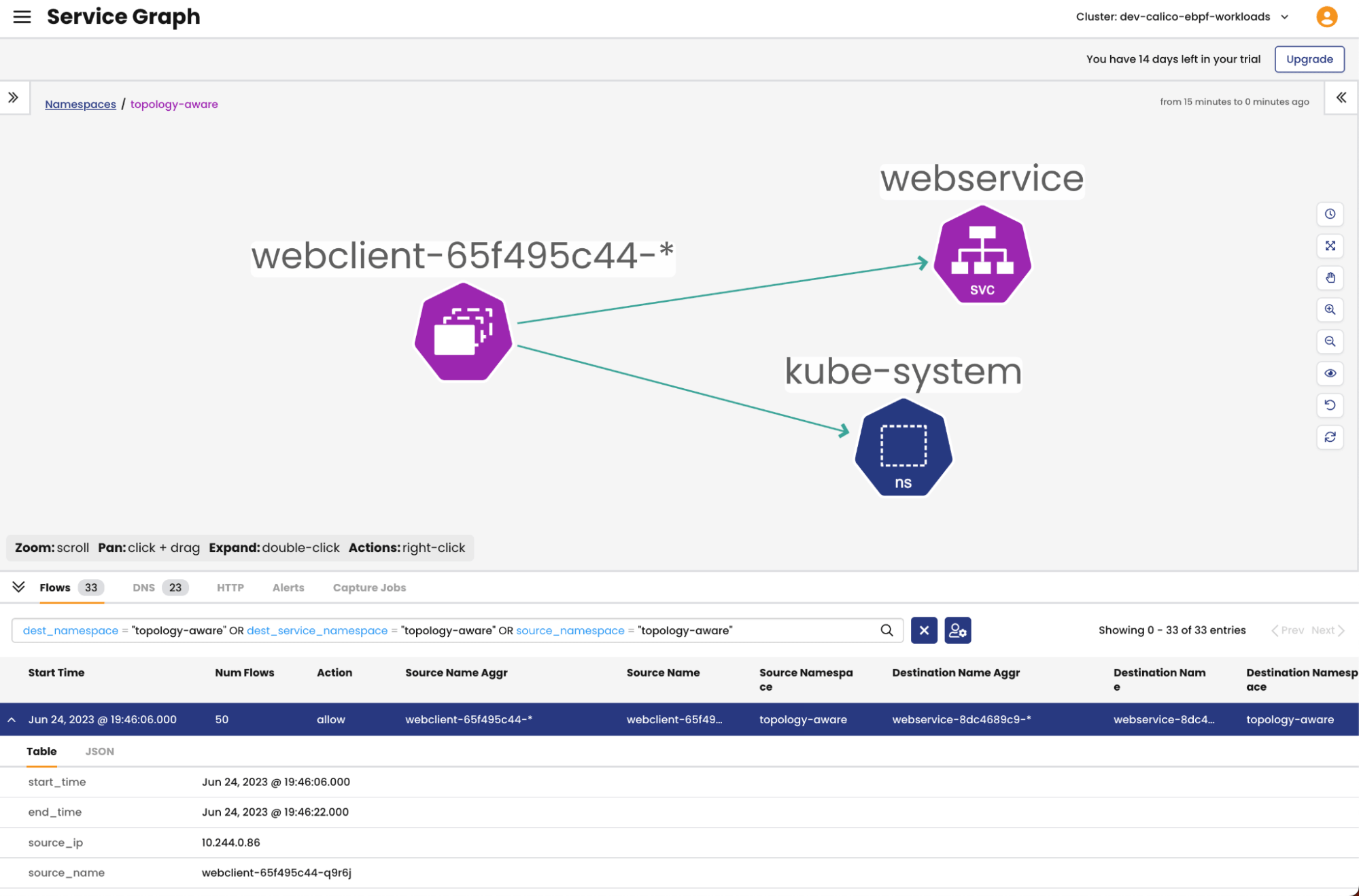

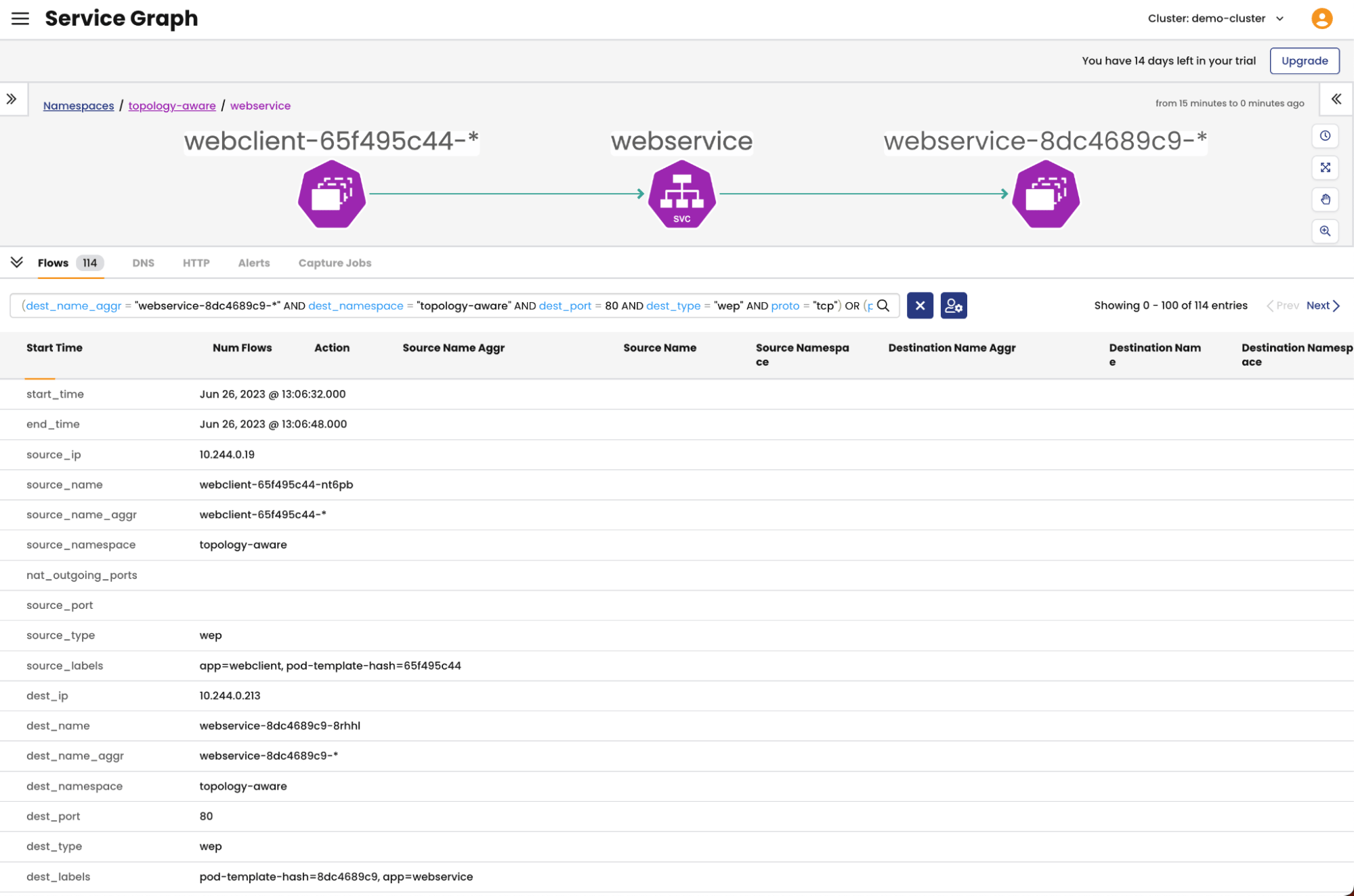

We can observe the traffic flows using the Calico Cloud Dynamic Service Graph and flow logs. Without topology aware routing, traffic is spread across the nodes in both us-east-1a and us-east-1b. We can also tail the logs on the nginx webservice pods to see the requests.

Next, we will observe the same traffic flows, but this time with topology aware routing enabled. This comparison gives us a clear picture of how topology aware routing affects traffic flows between the two microservices.

Annotate the webservice service to be topology aware with the following key / value pair service.kubernetes.io/topology-aware-hints: auto.

    $ kubectl annotate service -n topology-aware webservice service.kubernetes.io/topology-aware-hints=auto
    service/webservice annotated

We can verify that the webservice service is topology aware by inspecting its EndpointSlice resource; each endpoint should now have an additional hints section. If the hints are missing, the cluster is not topology aware, most likely because it failed one of the safeguards.

    $ kubectl get endpointslice -n topology-aware -o yaml | grep hints -A 3
        hints:
          forZones:
          - name: us-east-1b
        nodeName: ip-10-0-181-178.ec2.internal
    --
        hints:
          forZones:
          - name: us-east-1a
        nodeName: ip-10-0-166-121.ec2.internal
    --
        hints:
          forZones:
          - name: us-east-1a
        nodeName: ip-10-0-168-243.ec2.internal
    --
        hints:
          forZones:
          - name: us-east-1a
        nodeName: ip-10-0-175-233.ec2.internal
    --
        hints:
          forZones:
          - name: us-east-1b
        nodeName: ip-10-0-182-215.ec2.internal
    …
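Rather than reading the grep output by eye, we can tally the hints per zone. The Python sketch below parses the JSON form of the same listing (`kubectl get endpointslice -n topology-aware -o json`). The helper name is ours, and the embedded sample is a trimmed, illustrative document that keeps only the fields the function reads.

```python
import json
from collections import Counter


def hints_per_zone(endpointslice_list_json: str) -> Counter:
    """Count endpoints per hinted zone in an EndpointSlice list document."""
    counts = Counter()
    for item in json.loads(endpointslice_list_json).get("items", []):
        for endpoint in item.get("endpoints") or []:
            zones = (endpoint.get("hints") or {}).get("forZones") or []
            if not zones:
                counts["<no hint>"] += 1   # endpoint was not assigned to any zone
            for zone in zones:
                counts[zone["name"]] += 1
    return counts


# Trimmed sample for illustration; real output contains many more fields.
SAMPLE = json.dumps({
    "items": [{
        "endpoints": [
            {"nodeName": "ip-10-0-181-178.ec2.internal",
             "hints": {"forZones": [{"name": "us-east-1b"}]}},
            {"nodeName": "ip-10-0-166-121.ec2.internal",
             "hints": {"forZones": [{"name": "us-east-1a"}]}},
        ]
    }]
})

print(dict(hints_per_zone(SAMPLE)))
```

A roughly even count per zone and no `<no hint>` entries suggest that the hints were assigned as expected.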

Traffic should now be localized to a particular zone. You can verify this by looking through the flow logs shown in the Dynamic Service Graph or by manually initiating connections to the service from each availability zone.

To manually test connectivity and traffic routing within the cluster, you can follow these steps:

- Access a worker node in the us-east-1a zone using a shell or command-line interface.

- Send traffic to the webservice service’s cluster IP address or name. For example, you can use the command: curl webservice.topology-aware.

- Next, access a worker node in the us-east-1b zone using a shell or command-line interface, and repeat the tests.

By executing these commands, you can verify the connectivity and observe how traffic is routed within the cluster based on the specified zones.

    $ kubectl exec -it -n topology-aware webclient-65f495c44-cg84z -- bash
    locust@webclient-65f495c44-cg84z:~$ curl webservice.topology-aware
    <!DOCTYPE html>
    <html>
    <head>
    <title>Welcome to nginx!</title>
    <style>
    html { color-scheme: light dark; }
    body { width: 35em; margin: 0 auto;
    font-family: Tahoma, Verdana, Arial, sans-serif; }
    </style>
    </head>
    <body>
    <h1>Welcome to nginx!</h1>
    <p>If you see this page, the nginx web server is successfully installed and
    working. Further configuration is required.</p>
    <p>For online documentation and support please refer to
    <a href="http://nginx.org/">nginx.org</a>.<br/>
    Commercial support is available at
    <a href="http://nginx.com/">nginx.com</a>.</p>
    <p><em>Thank you for using nginx.</em></p>
    </body>
    </html>

Tail the logs of the backing webservice pods to verify that traffic is routed to them to serve the request.

    $ kubectl logs -n topology-aware webservice-8dc4689c9-4d8p8 -f
    10.244.0.158 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"
    10.244.0.207 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"
    10.244.0.5 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"
    10.244.0.160 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"
    10.244.0.207 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-"
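To turn log lines like these into a locality check, we can group requests by the zone of the client pod. The Python sketch below is one way to do that. The mapping from pod IP to zone is a hypothetical placeholder that you would build yourself (for example from `kubectl get pods -o wide` and the node zone labels); the zones shown here are not taken from the cluster above.

```python
import re
from collections import Counter
from typing import Dict, Iterable

# Matches the client address at the start of an nginx access log line.
CLIENT_IP = re.compile(r"^(\d{1,3}(?:\.\d{1,3}){3}) ")


def requests_by_zone(log_lines: Iterable[str], pod_zone: Dict[str, str]) -> Counter:
    """Count requests per client zone; unmapped client IPs are counted as 'unknown'."""
    counts = Counter()
    for line in log_lines:
        match = CLIENT_IP.match(line)
        if match:
            counts[pod_zone.get(match.group(1), "unknown")] += 1
    return counts


LOG_LINES = [
    '10.244.0.158 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"',
    '10.244.0.207 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"',
    '10.244.0.5 - - [25/Jun/2023:17:24:04 +0000] "GET / HTTP/1.1" 200 615 "-" "python-urllib3/1.26.15" "-"',
]

# Hypothetical mapping for illustration only; replace with your own pod-to-zone data.
POD_ZONE = {"10.244.0.158": "us-east-1a", "10.244.0.207": "us-east-1a"}

print(dict(requests_by_zone(LOG_LINES, POD_ZONE)))
```

If topology aware routing is working, a server pod's log should be dominated by clients from its own zone.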

Conclusion

In this blog post, we explored how Calico and topology aware routing can optimize network performance in containerized environments, focusing on Amazon EKS. By implementing topology aware routing, organizations can reduce latency, cut inter-zone data transfer costs, and optimize network traffic flow within their clusters, while still spreading nodes across availability zones for high availability. Calico complements this by managing encapsulation efficiently: with the IP pool configured for VXLANCrossSubnet encapsulation, pods within the same VPC subnet communicate directly, and only traffic that crosses subnet boundaries is encapsulated. Together, topology aware routing and Calico help minimize latency and maximize network efficiency in Amazon EKS clusters, delivering better application performance and a better user experience.

Ready to try Calico for yourself? Get started with a free Calico Cloud trial.

Steven Boland contributed to this blog post.

