Author’s note: This is a big, gnarly, technical post. I’m not apologising, because I love nothing more than a technical deep dive, but this may not be for you. At the very least, grab a cup of coffee before you get started. Maybe a cookie, too. Treat yourself.

One of the big observable shifts in the software industry over the past few years has been to emphasise concurrency over simple parallelism. This can be seen in part by looking at the meteoric rise of the Go programming language, which has concurrency built into the language at a fundamental level, but it can also been seen by looking at languages like Node.js, which are built around a concurrent but not parallel programming model.

This model is not new, however, and many languages have tooling that can be used to build that kind of model. One of the most popular models is co-operative green threading. In this model, rather than using lots of OS-level threads, with their large memory overhead and comparatively expensive cost to create, lightweight application-level ‘green’ threads are used. Each green thread runs until it yields the flow of control, usually in response to doing some I/O, at which point another green thread is free to run. This co-operative threading model doesn’t necessarily work well when you’re trying to do a ton of CPU-heavy computation, but if you do small bursts of work in response to network I/O it is an excellent way to get efficient use of your CPUs and memory.

Calico components do relatively small amounts of computation but lots of blocking network and syscalls, which makes co-operative green threading the natural model. Because our Felix agent is written in Python, we achieved co-operative concurrency using one of the Python solutions that add this concurrency to Python: specifically, we chose to use gevent.

Gevent: Concurrency for Free.

gevent is enormously popular in the Python community, in part because it is implicitly concurrent. This means that you write your Python code in exactly the way you normally would, and when you do some action that would normally block for I/O, gevent instead interrupts the flow of execution and allows other green threads to run.

For example, the following code shows a function that writes a few messages to a TCP socket in gevent:

def write_messages(socket):

# When the next line is executed, this function will yield the flow of

# control and let other code execute. When the write is complete, the

# function will resume executing from the statement after.

socket.send(b’first message’) # Yields

socket.send(b’second message’) # Yields

some_cpu_bound_function() # Doesn’t yield

socket.send(b’third message’) # Yields

At each socket send event, gevent implicitly takes over and forces the function to freeze its execution. Other code can then run.

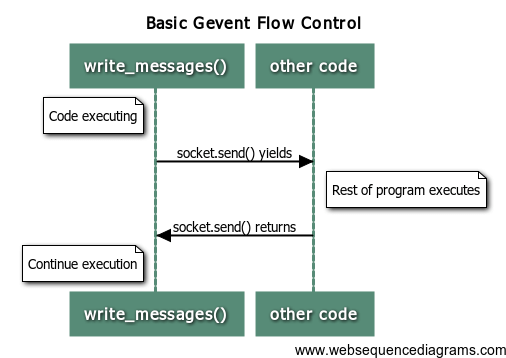

The flow of control can be seen below:

Compare that to the equivalent code using Twisted:

@inlineCallbacks

def writeMessages(transport):

# This function yields the flow of control at each ‘yield’ statement,

# but otherwise functions the same as the gevent code.

yield transport.write(b’first message’) # Yields

yield transport.write(b’second message’) # Yields

some_cpu_bound_function() # Doesn’t yield

yield transport.write(b’third message’) # Yields

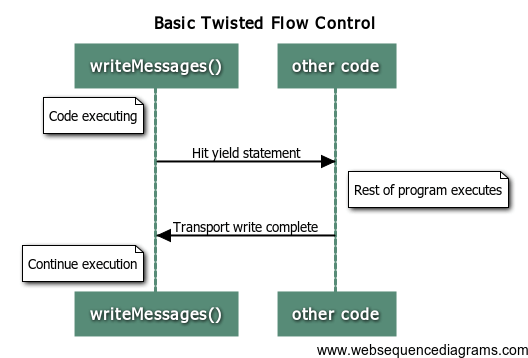

Ignoring the differences in coding style (which are covered here), the primary difference is the ‘yield’ statement in Twisted. This forms an explicit indication that the flow of execution will block at this point. This means, however, that code that was previously blocking cannot automatically become non-blocking: you need to fill it full of ‘yield’ statements. The similarity can be seen in the picture below. The effect of the code is the same, it’s only different in terminology:

With gevent, you can write code that appears very similar to ordinary Python code and expect it to be automatically concurrent. More importantly, you can use standard blocking Python libraries that do I/O (like requests, for example), and have them automatically become concurrent without any changes to the library being required. This makes it easy to take advantage of all of the goodness of the Python ecosystem, while also making efficient use of your CPU cycles and memory while increasing throughput.

This freedom makes gevent extremely flexible, but it comes with some downsides. The most important one is that, even though your code looks single-threaded, you need to remember that any function call or property access may cause your thread of execution to yield to the gevent event loop. This can break certain kinds of invariants if you aren’t careful. Essentially, this means that you cannot write your code as though it isn’t concurrent. Worse, it means you need to examine code you would previously have thought of as safe to guarantee that it actually is.

Nothing Comes for Free

The Calico team managed to encounter an extremely subtle problem arising from exactly this requirement. All of our code was written with concurrency in mind, and we are extremely careful to ensure that we can safely yield the flow of execution whenever we perform any kind of network activity or other I/O (such as subprocess communication). To help with this we wrote Felix in a gevent-based form of the actor model, which allows us to effectively treat certain method invocations as critical sections where state cannot be changed under our feet. Each actor runs in its own green thread (‘greenlet’ in gevent terms), but never in more than one, ensuring that data access cannot race with itself.

The problem with this actor model is that we need to make sure we handle exceptions properly. In particular, we want to make sure that exceptions that are truly unexpected are logged and then that the Felix process terminates, allowing the init system to restart us so we can rebuild our state.

To do this, we have the main greenlet hold a weak reference to all the other actor objects. This weak reference has an associated callback that will fire when the actor object is garbage collected, allowing us one final chance to introspect the state of the object. We use this opportunity to check whether an exception took out the actor, and if it did to log it out and then crash with an appropriate message.

Can you spot the problem here? We certainly didn’t.

How Well Do You Know Your Garbage Collector?

The actual bug is several levels deep, but relies on knowing a few quite deep things about the Python runtime and libraries.

The first thing is that gevent, while fairly magic, does not know anything about Python’s garbage collector. In particular, when CPython’s stop-the-world garbage collector runs, the asnychronous flow of control previously being managed by gevent is interrupted. In essence, gevent is paused, unaware, while the garbage collector executes. While the stop-the-world collector is running, gevent will believe the previously-executing greenlet is the active one.

The second thing is that the weak reference callback is called by the garbage collector itself. It runs in the same interrupted context that the garbage collector runs on. This means it runs as part of the same greenlet as the one the garbage collector interrupted. This makes the weak reference callback roughly equivalent to a finalizer.

The third thing is that, in CPython’s standard logging framework, log handlers can run arbitrary code. This can include network I/O, especially when using something like syslog.

And finally, the note that ties it all together: gevent monkeypatches the standard library to force a gevent context switch whenever network I/O is attempted.

So the bug is this: when an actor was cleaned up in this way, we’d attempt to log out the actor destruction at debug level. Most of this time this would be a non-issue, as either debug level logging wouldn’t be turned on, or syslog wouldn’t be attached to the logger.

However, if debug-level logging were turned on for syslog, we’d attempt to write the log. This would cause the syslog handler to attempt to write to a socket. At this point, gevent would happily try to yield the flow of control. Gevent will switch to another waiting greenlet, and suddenly your garbage collection has been interrupted by gevent.

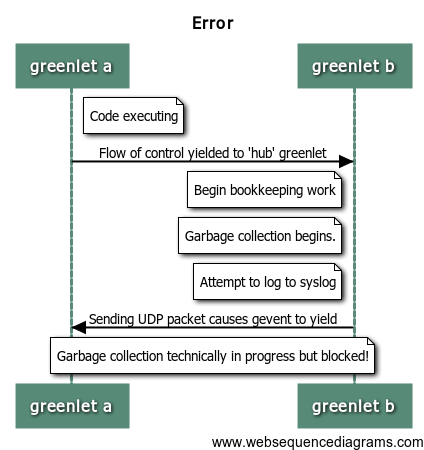

This flow of control can be seen below:

Unsurprisingly, at this point everything goes just a little bit wrong. It’s hard to work out exactly what will happen, but it’s entirely possible that this situation would go undetected, and garbage collection would never complete, or at least not until gevent schedules the affected greenlet again. More importantly, you’d have no way to know whether your running system is affected by this problem.

We only detected this because we hit one more unlikely complication: when the garbage collector interrupted, the gevent hub greenlet happened to be running. This greenlet is used by gevent itself to manage the runtime, and it runs a tiny fraction of the time. However, it is forbidden for this greenlet to perform IO, and gevent enforces this limitation. As a result, if the hub greenlet is interrupted by the garbage collector and you attempt to yield, just like we did, gevent will throw an exception. This gives you a ghost of a chance to know you’re affected.

Take Away

So what are the take aways from this? First, that gevent isn’t free: you need to be aware you’re writing for it. It can come back to bite you in really subtle ways that trip up even extremely experienced programmers. Second, that weak reference callbacks are run inside the garbage collector, and should be thought of as being exactly the same as finalizers. That leads to the third point: don’t do I/O in finalizers. Just don’t do it. Even in synchronous systems, don’t do it.

Happily for us, the fix was easy: just don’t log from that function. If we’re going to blow up, print the traceback to stderr, and let the init system catch it. Otherwise, just quietly let the finalizer pass. All is well again.

Join our mailing list

Get updates on blog posts, workshops, certification programs, new releases, and more!